Getting Started

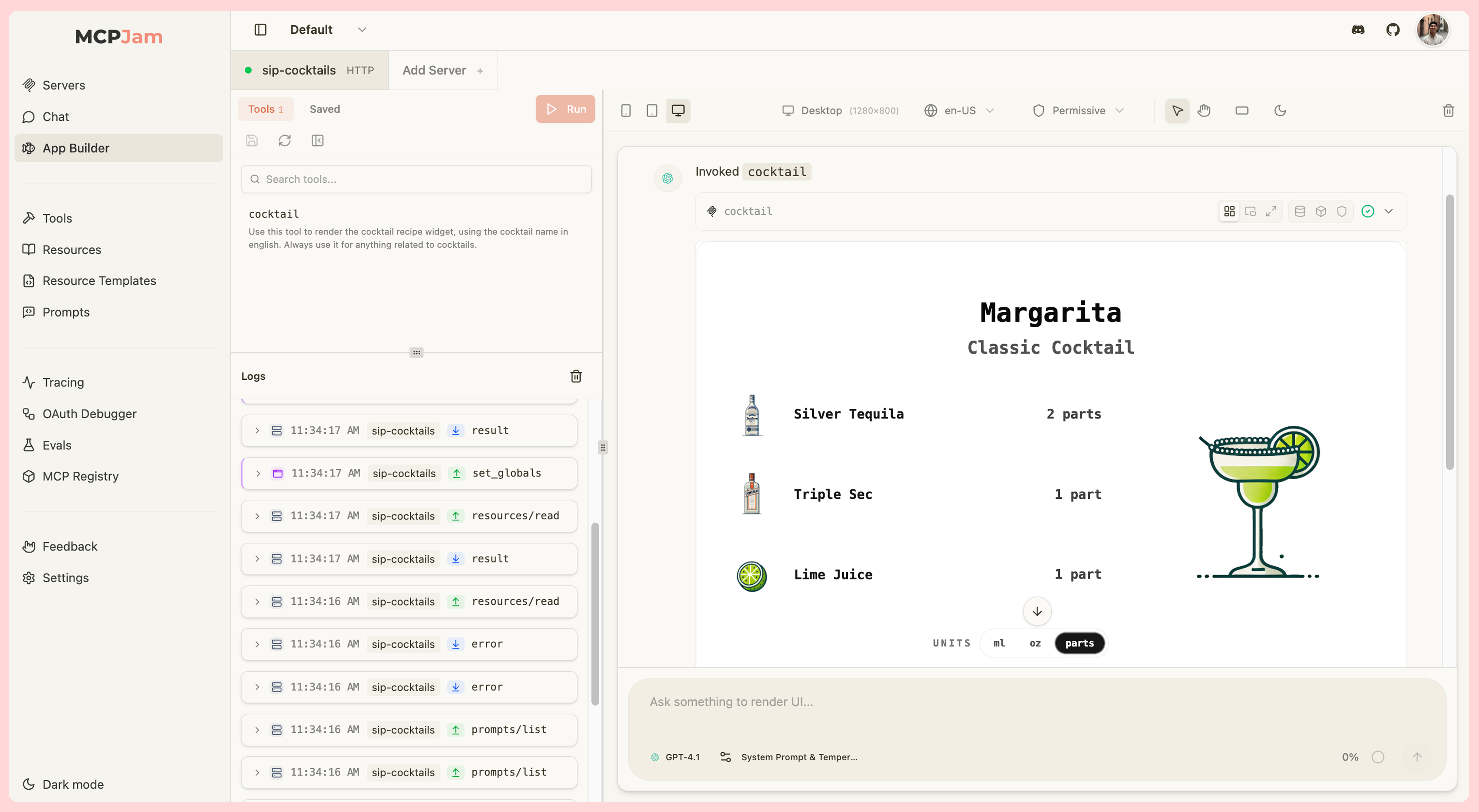

To start building apps with MCPJam:

- Connect your MCP server - Use the Servers tab to connect to an MCP server that returns widget-enabled tools

- Navigate to App Builder - Switch to the App Builder tab in the inspector

- Invoke a tool - Either manually invoke a tool from the Tools list, or chat with your server in the playground

If the tool's metadata declares a widget (openai/outputTemplate for ChatGPT Apps or ui.resourceUri for MCP Apps), it will render immediately in the widget emulator.
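As a rough illustration of the metadata involved, the sketch below models three tool descriptors as plain Python dictionaries and reports which widget protocol each one declares. The tool names, the ui:// URIs, and the widget_protocols helper are hypothetical, and the nesting of ui.resourceUri under _meta is an assumption; check your SDK for the exact shape.

```python
# Hypothetical tool descriptors, shaped like entries in a tools/list response.
# Tool names and ui:// URIs are made up for illustration.
CHATGPT_TOOL = {
    "name": "show_weather",
    "_meta": {"openai/outputTemplate": "ui://widget/weather.html"},
}
MCP_APP_TOOL = {
    "name": "show_chart",
    # Assumption: ui.resourceUri is nested as _meta -> ui -> resourceUri.
    "_meta": {"ui": {"resourceUri": "ui://charts/main.html"}},
}
PLAIN_TOOL = {"name": "add_numbers"}


def widget_protocols(tool: dict) -> list[str]:
    """Return the widget protocols a tool descriptor declares, if any."""
    meta = tool.get("_meta") or {}
    protocols = []
    if "openai/outputTemplate" in meta:
        protocols.append("chatgpt-apps")
    if (meta.get("ui") or {}).get("resourceUri"):
        protocols.append("mcp-apps")
    return protocols


for tool in (CHATGPT_TOOL, MCP_APP_TOOL, PLAIN_TOOL):
    print(tool["name"], widget_protocols(tool))
```

A tool that returns an empty list here has no widget metadata, so you would expect only its raw result in the chat thread.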

Display Context

Device Viewports

Test your widgets across different device types to ensure responsive design:

- Desktop (1280x800) - Standard desktop viewport for full-screen experiences

- Tablet (820x1180) - Medium-sized viewport for tablet devices

- Mobile (430x932) - Small viewport mimicking phone screens

- Custom - Set arbitrary width and height values between 100 and 2560 pixels
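The preset sizes and the custom range above can be captured as data when scripting responsive checks outside the inspector. The sketch below is illustrative only: Viewport, VIEWPORT_PRESETS, and custom_viewport are hypothetical names, and whether the App Builder clamps or rejects out-of-range values is not stated here, so this version simply rejects them.

```python
from dataclasses import dataclass

MIN_SIZE, MAX_SIZE = 100, 2560  # custom range stated above, in pixels


@dataclass(frozen=True)
class Viewport:
    width: int
    height: int


# Preset sizes as listed above.
VIEWPORT_PRESETS = {
    "desktop": Viewport(1280, 800),
    "tablet": Viewport(820, 1180),
    "mobile": Viewport(430, 932),
}


def custom_viewport(width: int, height: int) -> Viewport:
    """Build a custom viewport, rejecting values outside 100..2560."""
    for label, value in (("width", width), ("height", height)):
        if not MIN_SIZE <= value <= MAX_SIZE:
            raise ValueError(
                f"{label} must be between {MIN_SIZE} and {MAX_SIZE}, got {value}"
            )
    return Viewport(width, height)
```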

Theme Testing

Toggle between light and dark modes to test your widget’s appearance in both themes.

Host Styles

Switch between Claude and ChatGPT host styles to preview how your widget and the chat thread look in each host environment. For MCP Apps that use the MCP Apps style variables, the ChatGPT toggle translates the widget’s styles to ChatGPT’s design tokens.

Locale Configuration

Test your app’s internationalization by selecting different locales from the locale selector. Choose from common BCP 47 locales (e.g., en-US, es-ES, ja-JP, fr-FR) to verify that your widget properly handles different languages and regions.
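One thing worth checking when switching locales is how your widget falls back when it has no exact match for the selected tag. The sketch below shows a simplified truncation-based fallback, similar in spirit to the lookup scheme in RFC 4647. The message catalog and both function names are hypothetical, and real widgets would normally rely on an i18n library instead.

```python
def locale_fallbacks(tag: str, default: str = "en") -> list[str]:
    """Return lookup candidates for a BCP 47 tag, most specific first."""
    parts = tag.split("-")
    candidates = ["-".join(parts[:i]) for i in range(len(parts), 0, -1)]
    if default not in candidates:
        candidates.append(default)
    return candidates


# Hypothetical message catalog keyed by locale tag.
MESSAGES = {
    "en": "Hello",
    "es": "Hola",
    "ja-JP": "こんにちは",
}


def translate(tag: str) -> str:
    """Pick the first catalog entry that matches the fallback chain."""
    for candidate in locale_fallbacks(tag):
        if candidate in MESSAGES:
            return MESSAGES[candidate]
    raise KeyError(tag)


for tag in ("en-US", "es-ES", "ja-JP", "fr-FR"):
    print(tag, locale_fallbacks(tag), translate(tag))
```

With this catalog, fr-FR has no French entry and ends up on the English default, which is the kind of gap the locale selector makes easy to spot.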

Content Security Policy (CSP)

The App Builder includes CSP enforcement controls to help you test widget security configurations. Switch between two CSP modes using the shield icon:

- Permissive (default) - Allows all HTTPS resources, suitable for development

- Strict - Only allows domains declared in your widget’s CSP metadata (openai/widgetCSP for ChatGPT Apps, ui.csp for MCP Apps)
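The difference between the two modes can be approximated with a small check on a single outgoing request. This is a simplified sketch, not the emulator's actual logic: the is_allowed helper and the example domains are hypothetical, and real CSP source matching (wildcards, ports, per-directive rules) is richer than the exact-origin comparison used here.

```python
from urllib.parse import urlsplit


def is_allowed(url: str, mode: str, declared_domains: list[str]) -> bool:
    """Approximate the two CSP modes for a single outgoing request."""
    parts = urlsplit(url)
    if mode == "permissive":
        # Permissive mode: any HTTPS resource is allowed.
        return parts.scheme == "https"
    if mode == "strict":
        # Strict mode: only origins declared in the widget's CSP metadata.
        origin = f"{parts.scheme}://{parts.netloc}"
        return origin in declared_domains
    raise ValueError(f"unknown CSP mode: {mode}")


# Hypothetical declared domain list for a widget.
declared = ["https://api.example.com"]

print(is_allowed("https://cdn.example.com/app.js", "permissive", declared))
print(is_allowed("https://cdn.example.com/app.js", "strict", declared))
print(is_allowed("https://api.example.com/v1/items", "strict", declared))
```

A request that passes in permissive mode but fails in strict mode points at a domain that still needs to be declared before the widget ships.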

Device Capabilities

Configure device-specific capabilities to test different interaction patterns:

- Hover - Enable/disable hover support to test mouse-based interactions vs touch-only interfaces

- Touch - Toggle touch input to simulate mobile and tablet devices

Safe Area Insets

Simulate device notches, rounded corners, and gesture areas with configurable safe area insets:

- Preset Profiles - Quick access to common device configurations:

  - None (0px)

  - iPhone with Notch (44px top, 34px bottom)

  - iPhone with Dynamic Island (59px top, 34px bottom)

  - Android gesture navigation (24px top, 16px bottom)

- Custom Values - Manually adjust top, bottom, left, and right insets
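To see how much room a preset leaves for content, the insets can be subtracted from the viewport size. The sketch below uses the preset values listed above. The Insets class, the preset keys, and usable_area are hypothetical names, and the left and right insets of each preset are assumed to be 0 because only top and bottom values are given.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Insets:
    top: int = 0
    bottom: int = 0
    left: int = 0
    right: int = 0


# Preset values as listed above; left and right are assumed to be 0.
INSET_PRESETS = {
    "none": Insets(),
    "iphone-notch": Insets(top=44, bottom=34),
    "iphone-dynamic-island": Insets(top=59, bottom=34),
    "android-gesture": Insets(top=24, bottom=16),
}


def usable_area(width: int, height: int, insets: Insets) -> tuple[int, int]:
    """Return the (width, height) left after subtracting the insets."""
    return (
        width - insets.left - insets.right,
        height - insets.top - insets.bottom,
    )


# Mobile viewport (430x932) with each preset applied.
for name, insets in INSET_PRESETS.items():
    print(name, usable_area(430, 932, insets))
```

On the 430x932 mobile viewport, the Dynamic Island preset leaves 839 pixels of height, so anything pinned to the top or bottom edge is worth checking in that profile.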

Timezone

Select a timezone from the toolbar to test time-aware widgets. The selector includes 19 IANA timezones covering all major regions, plus UTC.

The timezone selector is only available for MCP Apps (SEP-1865).

Widget Controls

Protocol Selector

When an MCP App includes ChatGPT compatibility metadata (openai/outputTemplate alongside ui.resourceUri), a protocol toggle appears in the left panel header. This lets you switch which host bridge the emulator uses to render the widget:

- ChatGPT Apps - Renders using the window.openai host bridge

- MCP Apps - Renders using the ui/* JSON-RPC host bridge

Display Modes

The App Builder supports all three display modes for both ChatGPT Apps and MCP Apps:

- Inline (default) - Widget renders within the chat message flow

- Picture-in-Picture - Widget floats at the top of the screen, staying visible while scrolling

- Fullscreen - Widget expands to fill the entire viewport for immersive experiences

Debugging Tools

Each tool result in the chat thread has a row of icons in its header. Click any icon to toggle the corresponding debug panel below the widget.

Data

Inspect the raw tool input, output, and error details for each tool call.

Widget State (ChatGPT Apps only)

View the current widgetState object and see when it was last updated. This is the state your widget sets via window.openai.setWidgetState that gets passed back to the model.

Model Context (MCP Apps only)

View the context your widget has sent back to the model via ui/update-model-context. This panel only appears when the widget has set model context.

CSP Debugging

When a widget violates CSP rules in strict mode, you’ll see a badge showing the number of blocked requests. The CSP debug tab shows:

- Suggested fix - Copyable JSON snippet to add to your openai/widgetCSP or ui.csp field

- Blocked requests - List of CSP violations with the directive and source that was blocked

- Declared domains - The connect, resource, frame, and base URI domains your widget currently declares
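To give a feel for how blocked requests could translate into a suggested fix, the sketch below groups violation records by origin and prints a JSON patch. It is not the inspector's implementation. The violation record fields (directive, blocked_url) and the suggest_csp_fix helper are hypothetical, and the connect_domains and resource_domains key names are an assumption about the openai/widgetCSP shape; ui.csp for MCP Apps may use different key names.

```python
import json
from urllib.parse import urlsplit


def suggest_csp_fix(violations: list[dict]) -> dict:
    """Group blocked origins into a widgetCSP-style patch."""
    # Assumption: connect-src maps to connect_domains and every other
    # directive (img-src, script-src, ...) maps to resource_domains.
    fix = {"connect_domains": set(), "resource_domains": set()}
    for violation in violations:
        parts = urlsplit(violation["blocked_url"])
        origin = f"{parts.scheme}://{parts.netloc}"
        if violation["directive"] == "connect-src":
            fix["connect_domains"].add(origin)
        else:
            fix["resource_domains"].add(origin)
    # Drop empty groups and sort for a stable, copyable snippet.
    return {key: sorted(domains) for key, domains in fix.items() if domains}


# Hypothetical violation records, one per blocked request.
violations = [
    {"directive": "connect-src", "blocked_url": "https://api.example.com/v1/items"},
    {"directive": "img-src", "blocked_url": "https://cdn.example.com/logo.png"},
    {"directive": "script-src", "blocked_url": "https://cdn.example.com/app.js"},
]

print(json.dumps({"openai/widgetCSP": suggest_csp_fix(violations)}, indent=2))
```

Grouping by origin rather than full URL keeps the declared list short, since two blocked files on the same CDN need only one entry.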

Save View

Save a snapshot of the tool execution to the Views tab for later browsing, editing, and sharing.

JSON-RPC Logging

All communication between the inspector and your MCP server is logged in real time. The logger panel sits at the bottom of the left panel, below the tools list. Each log entry shows the direction (client-to-server or server-to-client), the method name, and a timestamp. Logged messages include:

- Tool invocation requests and responses

- window.openai API calls from your widget

- Widget state updates

- JSON-RPC message types for MCP Apps (tools/call, ui/initialize, ui/message, etc.)
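When reading the log, it helps to recall how JSON-RPC 2.0 messages are told apart: requests have a method and an id, notifications have a method but no id, and responses have an id with either a result or an error. The sketch below condenses a message into one line with direction, kind, and timestamp. The log_line helper, the arrow notation, and the example tool name and arguments are hypothetical and do not reflect the logger panel's actual format.

```python
from datetime import datetime, timezone

ARROWS = {"client-to-server": "->", "server-to-client": "<-"}


def log_line(direction: str, message: dict, when: datetime) -> str:
    """Summarize one JSON-RPC 2.0 message as a single log line."""
    if "method" in message:
        # Requests carry an id; notifications do not.
        kind = "request" if "id" in message else "notification"
        label = message["method"]
    else:
        # Responses carry either a result or an error, never a method.
        kind = "error" if "error" in message else "response"
        label = f"id={message.get('id')}"
    return f"{when:%H:%M:%S} {ARROWS[direction]} {kind} {label}"


request = {
    "jsonrpc": "2.0",
    "id": 1,
    "method": "tools/call",
    # Hypothetical tool name and arguments.
    "params": {"name": "show_weather", "arguments": {"city": "Paris"}},
}
response = {"jsonrpc": "2.0", "id": 1, "result": {"content": []}}

now = datetime.now(timezone.utc)
print(log_line("client-to-server", request, now))
print(log_line("server-to-client", response, now))
```

Matching a response to its request by id is usually the quickest way to find which tool call produced a given result.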

Chat Controls

Model Selector

Choose which LLM to use for the chat conversation. The selector supports models from multiple providers, including Anthropic and OpenAI. You can also bring your own API key to use custom providers.

System Prompt & Temperature

Configure the system prompt and temperature for the LLM. Click the settings icon to open a dialog where you can edit the system prompt text and adjust the temperature slider.

Tool Approval

Toggle tool approval to require manual confirmation before the LLM executes any tool call. When enabled, each tool invocation pauses and waits for your approval before running.

X-Ray

X-Ray lets you inspect the exact payload that would be sent to the AI model. This is useful for debugging tool schemas, verifying system prompts, and understanding what the LLM actually sees when processing a conversation. Click the scan-search icon in the chat input toolbar to open X-Ray. The chat thread is replaced with a read-only JSON viewer showing three top-level fields:

- system - The full system prompt, including appended skill-tool instructions

- tools - A map of every available tool with its name, description, and JSON Schema input definition

- messages - The current conversation history as it would be sent to the model
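For orientation, a payload with these three fields might look roughly like the dictionary below, along with a small helper that summarizes it. Everything here is illustrative: the tool, the prompt text, and the summarize helper are hypothetical, and the per-tool key names (description, inputSchema) and the message shape are assumptions, so compare against what X-Ray actually shows for your server.

```python
# Hypothetical payload with the three top-level fields described above.
xray_payload = {
    "system": "You are a helpful assistant.",
    "tools": {
        "show_weather": {
            "description": "Show the weather for a city.",
            # Assumption: the JSON Schema input definition is keyed inputSchema.
            "inputSchema": {
                "type": "object",
                "properties": {"city": {"type": "string"}},
                "required": ["city"],
            },
        }
    },
    "messages": [{"role": "user", "content": "What's the weather in Paris?"}],
}


def summarize(payload: dict) -> dict:
    """Report the size of each section and any tools lacking a description."""
    tools = payload.get("tools", {})
    return {
        "system_chars": len(payload.get("system", "")),
        "tool_names": sorted(tools),
        "message_count": len(payload.get("messages", [])),
        "tools_without_description": sorted(
            name for name, spec in tools.items() if not spec.get("description")
        ),
    }


print(summarize(xray_payload))
```

A tool that shows up under tools_without_description is a common reason for a model never calling it, which is the sort of issue the X-Ray view helps surface.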