Setup

Set your MCPJam API key in the MCPJAM_API_KEY environment variable.

EvalTest and EvalSuite will auto-save results when this key is available.
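Before running evals, it can help to confirm that the key is actually visible to the process. The following is a minimal sketch, not part of the MCPJam SDK: the helper name `hasMcpjamApiKey` is hypothetical, and the only detail taken from this page is the variable name MCPJAM_API_KEY.

```typescript
// Hypothetical helper (not an SDK function): reports whether the
// MCPJAM_API_KEY variable holds a non-empty value in the given environment.
function hasMcpjamApiKey(env: Record<string, string | undefined>): boolean {
  const key = env["MCPJAM_API_KEY"];
  return typeof key === "string" && key.trim().length > 0;
}

// Demo with a literal environment object; the value is a placeholder.
const demoEnv: Record<string, string | undefined> = {
  MCPJAM_API_KEY: "example-key",
};

if (!hasMcpjamApiKey(demoEnv)) {
  console.warn("MCPJAM_API_KEY is not set; auto-save is not expected to run.");
}
```

In a Node.js script you would typically pass `process.env` instead of the literal object used here.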

Auto-Save from EvalTest

When MCPJAM_API_KEY is set, EvalTest.run() automatically saves results.

Auto-Save from EvalSuite

Suites can be configured at construction or run time.

Within a suite, individual EvalTest auto-saves are suppressed to avoid duplicate uploads. The suite consolidates all test results into a single run.
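The auto-save rules described so far can be summarized as a small decision function. This is an illustrative model only, not SDK code: the names `AutoSaveContext`, `Saver`, and `whoSaves` are hypothetical, and the logic simply restates the two rules from this page (saving requires the API key; tests run by a suite leave saving to the suite).

```typescript
// Illustrative model of the documented behavior; none of these names
// exist in the MCPJam SDK.
interface AutoSaveContext {
  apiKeySet: boolean; // is MCPJAM_API_KEY available?
  runBySuite: boolean; // is the test being run as part of an EvalSuite?
}

type Saver = "test" | "suite" | "none";

function whoSaves(ctx: AutoSaveContext): Saver {
  // Without a key, nothing is auto-saved.
  if (!ctx.apiKeySet) return "none";
  // Inside a suite, the suite uploads one consolidated run;
  // a standalone test saves its own results.
  return ctx.runBySuite ? "suite" : "test";
}

console.log(whoSaves({ apiKeySet: true, runBySuite: true }));
```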

Manual Save APIs

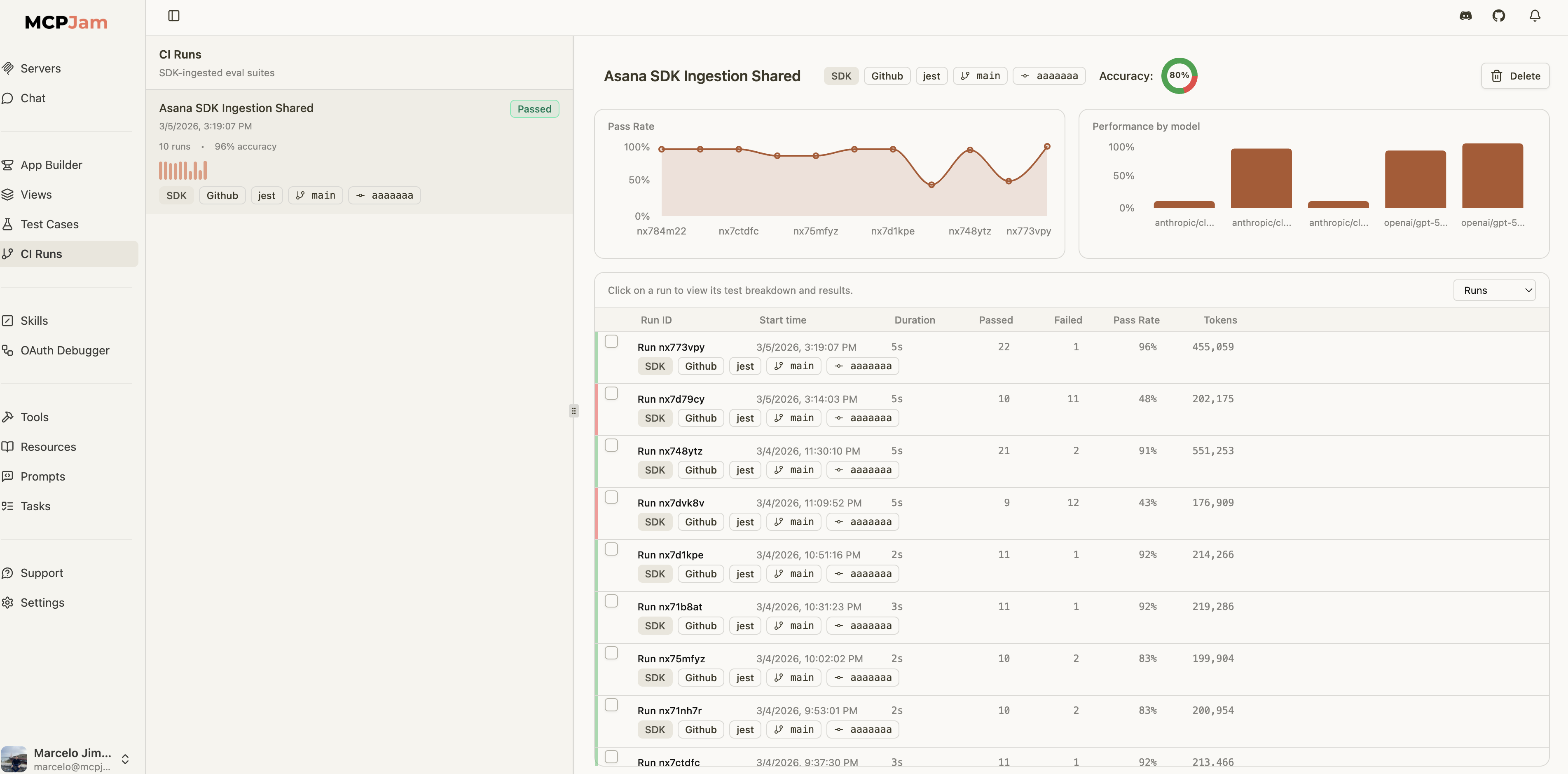

For more control (custom test runners, CI post-steps, or framework-agnostic flows), the SDK provides dedicated APIs.

CI Metadata

Attach CI/CD context to your eval runs for traceability in the dashboard.
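As one way to gather such context, the sketch below reads the default environment variables that GitHub Actions sets for every job. It does not call the MCPJam SDK: the `CiContext` shape, its field names, and the function `collectGithubCiContext` are hypothetical, and how the result is passed to a run depends on the SDK's own metadata options, which are not shown here.

```typescript
// Hypothetical types and helper; the field names are illustrative and
// are not the SDK's metadata schema.
type CiEnv = Record<string, string | undefined>;

interface CiContext {
  commitSha: string | undefined;
  branch: string | undefined;
  runUrl: string | undefined;
}

function collectGithubCiContext(env: CiEnv): CiContext {
  // These are default GitHub Actions environment variables.
  const server = env["GITHUB_SERVER_URL"];
  const repo = env["GITHUB_REPOSITORY"];
  const runId = env["GITHUB_RUN_ID"];

  // Build a link to the workflow run only when every part is present.
  const runUrl =
    server && repo && runId
      ? `${server}/${repo}/actions/runs/${runId}`
      : undefined;

  return {
    commitSha: env["GITHUB_SHA"],
    branch: env["GITHUB_REF_NAME"],
    runUrl,
  };
}

// Demo with placeholder values rather than a real workflow run.
const demoContext = collectGithubCiContext({
  GITHUB_SERVER_URL: "https://github.com",
  GITHUB_REPOSITORY: "example-org/example-repo",
  GITHUB_RUN_ID: "1",
  GITHUB_SHA: "0000000",
  GITHUB_REF_NAME: "main",
});
console.log(demoContext);
```

Taking the environment as a parameter keeps the helper easy to test locally; in a workflow you would pass `process.env`. Other CI providers expose similar values under different variable names.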